Read the Series

- ► SEO Part 1: What is SEO?

- SEO Part 2: On-Site SEO

- SEO Part 3: Off-Site SEO

- SEO Part 4: Local SEO

SEO Brief Overview

One of the hardest questions for me to answer when talking to a business owner is, “What is SEO?” or “What does SEO include?”

Let’s get the first thing out of the way, “What does SEO stand for?” It stands for “Search Engine Optimization” and it exists for the purpose of optimizing your website and domain to rank higher on search engine results pages (SERPs) like Google, Bing, and Yahoo!.

The question isn’t hard because I’m unsure of what SEO includes. It’s hard because SEO includes and affects so much of your business’s online presence.

The question is even harder to explain when the client doesn’t understand the importance of having a website versus just a Facebook page.

See: “Why Do I Need a Website? Here Are 13 Reasons Why”

This is why I’m creating this 4-part series on what exactly SEO is and everything it includes, from its history to on-site SEO and off-site SEO.

An Overview of SEO

Any digital marketer will tell you that SEO is the foundation for your entire digital marketing presence. From building your website, to running Google ads, to even paying for social media advertising, this is the method that is going to put you on the radar.

SEO is the optimization of your website for search engines like Google, Bing, Yahoo!, etc. The practice exists to help your site rank in the top spots and be seen by your customers over your competitors’ sites.

But it isn’t just a bunch of speculation and non-FDA approved herbal remedy solutions. SEO tactics are proven methods that search engines like Google have even confirmed to influence rankings.

When you consider 94% of all US adults use search engines and 74% of those use them to research products and services, you’ll understand how important your search engine optimization is for your business.

If you’re looking for a general snapshot of what SEO is without reading everything I have to say, take a look at the Periodic Table of SEO Factors the team at Search Engine Land came up with:

A Simple Analogy

Your website is like your digital store or location. Imagine someone finds your store through advertisements elsewhere and has high expectations. They enter your building (website) and see that the floor is dirty, you’re out of stock on products, and your sales staff doesn’t know anything.

Now, this doesn’t reflect well on the place that referred that customer to you. So that referrer is going to start vetting the businesses it recommends so it doesn’t send people to bad ones.

This is SEO.

Just like a store, SEO needs to be maintained routinely. Traffic patterns need to be reviewed, the quality of the content needs to be updated and changed to conform to the market search patterns and requests, and your site needs to conform to new search engine algorithm updates.

But first, to understand the reasoning behind a lot of today’s SEO best practices, it’s important to go back in time a little and see why we SEOs do things the way we do them now.

A Brief History of SEO

1991 – 2002

On the fateful day of August 6th, 1991, Tim Berners-Lee created and launched the world’s first website. And unbelievably, it’s still running today!

Soon after, creating websites became more and more popular. There needed to be a way to catalog all these websites so people could find what they were looking for. Enter Excite, one of the early search engines. It changed the way the World Wide Web worked by cataloging sites according to the keywords found in each site’s content as well as its back-end optimization.

Shortly after, Yahoo! (1994) and Google (1997) entered the scene, improving the way data was indexed and delivered and dominating the search engine market.

It’s important to note that Google took the lead on the playing field with its PageRank algorithm, developed in 1996. The algorithm was designed to rank websites higher when they had more links pointing to them, almost like a popularity contest.
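To make the popularity contest idea concrete, here is a minimal Python sketch of the textbook version of PageRank. It is a simplified illustration only, not Google’s production algorithm. The domain names, the `pagerank` function, and the damping value of 0.85 are all assumptions made for the example.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank over a dict of page -> list of pages it links to."""
    # Collect every page that links out or is linked to.
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page gets a small baseline score each round.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its score evenly among the pages it links to.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outgoing links spreads its score over all pages.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


# Hypothetical toy web: three small sites all link to one popular site.
toy_web = {
    "a.example": ["popular.example"],
    "b.example": ["popular.example"],
    "c.example": ["popular.example", "a.example"],
    "popular.example": [],
}

ranks = pagerank(toy_web)
best = max(ranks, key=ranks.get)
print(best, round(ranks[best], 3))
```

Because every link counts as a vote no matter where it comes from, the site with the most incoming links ends up with the highest score in this toy model.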

But of course, people find ways to exploit the beginnings of something revolutionary. Enter ‘keyword stuffing’ and ‘link schemes’. Keyword stuffing was exactly what it sounds like: packing as many keywords into the content as you possibly could to mislead search engines into thinking your site was more relevant based on the number of keywords it contained.

Link schemes were developed to exploit the PageRank algorithm. Since there was no check in place to monitor the quality of the links linking to a website, marketers would often set up dozens or even hundreds of fake websites for the sole purpose of linking to their main website, or create websites that were essentially link farms where people would pay to have their link on that site.

2003 – 2005

As these unethical exploitation practices spread, Google led the initiative in cracking down on them. It began penalizing sites for keyword stuffing and bad link practices, which created the foundation for a more user-focused web.

2006 – 2009

Prior to 2006, search engines were very basic and focused primarily on delivering results from specific pages. Once we learned more about search patterns, Google developed its Universal Search. This is closer to what you see in search engines now with links to news, videos, images, etc.

Google went all out in this period. Marketers had tools like Google Analytics (launched in late 2005) and Google Trends (2006) to work with, and in 2008 Google began displaying suggested search queries based on historical data.

This era was all about ushering in better user experience for people using search engines to find what they were looking for.

2010 – 2012

We are now 20 years into the development of websites and the internet is packed full of them, from low-quality dummy sites to high-quality sites with huge authority.

Finding the content you were looking for was becoming a chore. This caused Google to make huge algorithm updates, two of which still shape the way we perform SEO.

Panda Update (2011)

The Panda update focused on preventing poor-quality content from making its way into the top search results of Google. This may sound like something they already took care of in 2003, but this update went all out.

It didn’t just focus on keyword stuffing. It focused on the quality of sentence structure, the varying keywords used and much more. You can check out a list of everything the Panda update affected here.

Penguin Update (2012)

This update focused on the sites creating bad link schemes by buying links or even linking to and from unrelated sites. Yes, now sites you link to and sites that link to you need to be related to you somehow by industry or content. But of course, like Panda, there’s much more to the update which you can read here.

This update is also the reason you now hear the term ‘quality’ in front of the term ‘backlinks’.

Related: “How to Gain More Quality Backlinks”

2013 – Present

Today, Google updates their algorithm almost every day and uses over 200 ranking signals when determining which sites get to show up first, which makes SEO a constant battle to be at the top of search engine results pages.

Search engines are always trying to improve their user experience and in doing this, they’re providing better and better insights to marketers to help them provide better quality content for their searchers and potential customers.

The catch? Google doesn’t tell us what the algorithm is or what they changed. Nearly everything is speculation based on fluctuations that third-party agencies observe in their reporting data and on hints here and there from conferences held by Google. Fortunately, we know the ranking factors, just not exactly how much each one influences rankings.

On-Site SEO

*Phew* Okay, back to the present day.

The first thing most people think of when hearing about SEO is on-site optimization, meaning, changes made on your site to optimize your presence in search engine rankings. But this is just one part of SEO.

On-site SEO is an on-page analysis by search engines that determines the quality of each of your website’s pages individually.

Search engines take into account various things like:

On-Site Data

- Header tags (H1, H2, H3, etc)

- Keywords

- Quality of content

- Internal linking structure

- Page speed and load time

- Alt text on images

The main reason search engines take into account on-site data like this is obvious. They want to make sure your site is credible in terms of content and housekeeping. If Google sees you’re keeping your site well maintained and your content up-to-date, they’re going to trust you more, which is why they, as well as third parties, have created measures to deliver this “housekeeping” information to search engines.

The big thing here is making sure all of your pages are optimized as well as optimizing your images.
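A couple of the on-site items above, header tags and image alt text, are easy to spot-check yourself. Here is a minimal sketch using Python’s built-in `html.parser` module. The `OnPageAudit` class and the sample HTML are made up for illustration, and this is a basic housekeeping check, not a picture of how any search engine actually scores a page.

```python
from html.parser import HTMLParser


class OnPageAudit(HTMLParser):
    """Counts heading tags and collects images that have no alt text."""

    HEADINGS = ("h1", "h2", "h3", "h4", "h5", "h6")

    def __init__(self):
        super().__init__()
        self.heading_counts = {}
        self.images_missing_alt = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag in self.HEADINGS:
            self.heading_counts[tag] = self.heading_counts.get(tag, 0) + 1
        elif tag == "img":
            # Treat a missing or blank alt attribute as a problem.
            alt_text = (attributes.get("alt") or "").strip()
            if not alt_text:
                self.images_missing_alt.append(attributes.get("src", "(no src)"))


# Hypothetical page markup for a lawn care site.
sample_html = """
<h1>Lawn Care in Springfield</h1>
<h2>Our Services</h2>
<img src="mowing.jpg" alt="Crew mowing a front lawn">
<img src="truck.jpg">
<h2>Contact Us</h2>
"""

audit = OnPageAudit()
audit.feed(sample_html)
print("Headings:", audit.heading_counts)
print("Images missing alt text:", audit.images_missing_alt)
```

A page with no H1, several H1s, or a long list of images without alt text is usually a sign that the housekeeping needs attention.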

Blogging is usually the best way to keep your content up-to-date and establish credibility with Google. Check out why your landscaping and lawn care business website needs a blog.

On-Site Visitor Metrics

- Bounce rate

- Time on site

- Direct traffic

- Pages per session

- Conversion rate

- And much more

We get the on-site data as a ranking factor, but why user behavior metrics? Well, like any referral source, they want to provide a good experience to the end user. If you don’t offer pest control services but you know a couple of people who do, are you more likely to refer that customer to someone that looks professional but does bad work, or someone that may not have the fancy truck but does excellent work and takes care of their customers?

Google wants to make sure it’s only providing the best quality search results. This means when the search engine starts collecting data on user behavior metrics for your site, they’re going to start using it in the ranking algorithm.

They want to make sure people are coming to your site and staying for a while. They don’t want visitors ‘bouncing’ (leaving without interacting with the page), and they want users to be engaged with your content (time on site metrics).
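If you want to see how a few of these visitor metrics are calculated, here is a small Python sketch. The session records and field names are hypothetical, and I’m assuming the common definition of a bounce as a session with only one page view. Your analytics tool may define these metrics differently.

```python
def engagement_summary(sessions):
    """Summarizes basic visitor metrics from a list of session records."""
    total = len(sessions)
    if total == 0:
        return {
            "bounce_rate": 0.0,
            "pages_per_session": 0.0,
            "avg_time_on_site_seconds": 0.0,
            "conversion_rate": 0.0,
        }

    # Assumption: a bounce is a session that viewed a single page.
    bounces = sum(1 for s in sessions if s["pageviews"] <= 1)
    pageviews = sum(s["pageviews"] for s in sessions)
    seconds = sum(s["seconds"] for s in sessions)
    conversions = sum(1 for s in sessions if s["converted"])

    return {
        "bounce_rate": bounces / total,
        "pages_per_session": pageviews / total,
        "avg_time_on_site_seconds": seconds / total,
        "conversion_rate": conversions / total,
    }


# Hypothetical sessions exported from an analytics tool.
sample_sessions = [
    {"pageviews": 1, "seconds": 10, "converted": False},
    {"pageviews": 4, "seconds": 180, "converted": True},
    {"pageviews": 3, "seconds": 110, "converted": False},
    {"pageviews": 1, "seconds": 20, "converted": False},
]

summary = engagement_summary(sample_sessions)
print(summary)
```

In this made-up sample, half of the visitors bounced and one in four converted. Those are the kinds of numbers worth watching over time.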

This is where technical SEO meets User Experience (UX) and quality content and information on your site.

Like I said earlier, there are over 200 ranking signals and these are just some of them. But you can see how search engines like Google have come a long way from simply reviewing the keyword content and the number of links coming to the site.

Off-Site SEO

Off-site SEO is the type of SEO that often gets forgotten about by business owners and even (amazingly) by some marketing agencies and freelancers.

The problem with that is that off-site SEO arguably holds all the top keys to ranking your website at the top of Google.

Off-site SEO is an off-page analysis of the wider internet to determine your website’s authority, based on how other domains and networks link to or reference it.

Remember those links coming to your site as a ranking signal? That’s primarily what this is and consists of things like:

- Backlinks

- Directory citations

- Review management

Domain Authority

If you’ve researched SEO even a little then you’ve probably heard of Domain Authority (DA). Some agencies will tell you that this is a metric Google uses to determine the authority of your website. This, however, is not true.

Google does not use Domain Authority as a site-wide ranking signal. Although Google does have its own domain-wide ranking signals, it primarily evaluates each individual page based on those 200 ranking signals I mentioned earlier.

The term and metric ‘Domain Authority’ comes from the third-party company Moz. Moz developed this metric as a fairly accurate estimate of your site’s ranking performance when the quality of backlinks to your site is taken into consideration.

It’s a score from 1 to 100, where Facebook is 100 and an established site can be anywhere from 40 and up.

The biggest flaw with this metric is that it isn’t always accurate because Moz’s crawlers aren’t as efficient as Google’s. A site could show a DA of 1 when its real score is closer to 20 or 30, simply because the Moz crawlers haven’t reached the site yet.

If you don’t want to take my word for it, you can check out what the ‘real’ experts have to say on it for yourself. Moz – “What is Domain Authority”.

Additionally, if you want to see what the DA of your own site is, just paste your URL into Moz’s free Open Site Explorer tool. You can also simply Google “DA checker” and get results for plenty of third-party DA checkers.

Check out our top 10 tips for picking the best domain for your business.

Backlinks

Backlinks and directory citations are two of the biggest contributing factors to establishing credibility with search engines.

You see, backlinking is like networking in the digital world. Which do you think is better?

- Networking with a bunch of small businesses with a small presence

- Or networking with a few huge business names and social authorities?

Probably the latter. If you thought the first, then you’re thinking in terms of the old PageRank algorithm. The quality of links far outweighs the quantity.

For more on quality backlinks, check out “How to Gain More Quality Backlinks”.

Directory Citations

Directory citations can be a little more confusing. The more relevant citations you have, the more credible you become in the eyes of search engines.

When you have your NAP (Name, Address, Phone) information located somewhere on the web, Google can correlate that to the NAP information on your site and strengthen your authority. As a bonus, you can even get some quality backlinks from certain directories without paying.
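Since the whole point is that the NAP on a directory matches the NAP on your site, consistency matters. Here is a rough Python sketch of how you might compare two listings after stripping out punctuation and capitalization differences. The business details and the `normalize_nap` helper are made up, and the phone handling assumes 10-digit US-style numbers. It won’t catch differences like “St” versus “Street”, and it isn’t how Google itself matches citations.

```python
import re


def normalize_nap(name, address, phone):
    """Returns a comparable (name, address, phone) tuple."""

    def clean(text):
        # Lowercase, drop punctuation, and collapse repeated spaces.
        text = re.sub(r"[^a-z0-9 ]", " ", text.lower())
        return " ".join(text.split())

    # Assumption: US-style numbers, so keep only the last 10 digits.
    digits = re.sub(r"\D", "", phone)
    return clean(name), clean(address), digits[-10:]


# Hypothetical listing from your own website.
site_listing = normalize_nap(
    "Example Lawn Care, LLC", "123 Main St., Springfield", "(555) 010-0199"
)

# Hypothetical listing found on a directory site.
directory_listing = normalize_nap(
    "example lawn care llc", "123 Main St Springfield", "+1 555-010-0199"
)

# Hypothetical outdated listing with an old address and phone number.
outdated_listing = normalize_nap(
    "Example Lawn Care", "456 Oak Ave, Springfield", "555-010-0100"
)

print("Directory matches site:", directory_listing == site_listing)
print("Outdated listing matches site:", outdated_listing == site_listing)
```

Listings that still don’t match after this kind of cleanup are the ones worth fixing by hand.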

Of course, there is a lot more to SEO. These are just the hot-button items. If you want to know more about off-site SEO, check out “SEO Part 3: What is Off-Site SEO?”

Local SEO

You’ve heard of on-site and off-site SEO, but how much do you know about local SEO?

It really isn’t that different from the first two. It’s more of a combination of factors from both on-site and off-site but there is a specific strategy that separates it from the other two types.

Local SEO is the optimization of your website’s local presence online.

What do I mean by “local presence”?

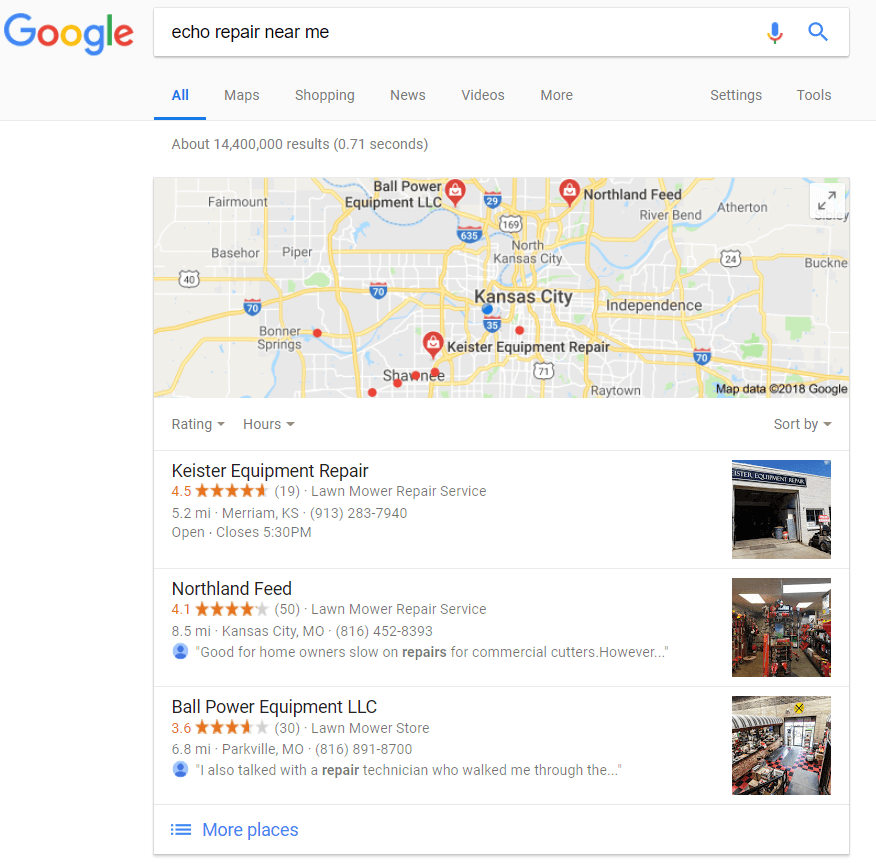

Think about the last time you typed something in Google that you needed to locate near you, like “Echo repair near me” or “Sod supplier (insert city name)”.

You might have seen something like this in Google.

Those three results are called the local 3-pack and it’s what Google deems the most relevant based on my keywords and physical location at the time of the search.

When a customer is looking for a lawn care service provider or landscaper near them, it is absolutely paramount to show up in this listing.

However, being the closest business to the searcher doesn’t guarantee you’ll show up here. Take, for example, Reynolds Lawn & Leisure. They showed up at number 7 on the list (I had to click on “More Places”).

Reynolds is listed as a Lawn Mower Repair Service and is located closer to me than Northland Feed, who appeared at the number 2 spot.

So what does this mean?

It means Reynolds needs some local SEO work. They may rank higher for other key terms related to the SEO of their site, but when a customer wants to find something near them, the algorithm weighs things a bit differently.

Things that influence these rankings are:

- Quality and volume of Google reviews

- Local NAP citations (See off-site SEO)

- The category of your business

- Optimization of your Google My Business listing

- Location-specific content on your site

- and of course… much more

But yet again, this is just the tip of the iceberg. I want to keep your interest piqued in all facets of SEO so I don’t want to keep dragging this post on.

If you’re looking for a more in-depth review and analysis of what exactly Local SEO entails, check out “SEO Part 4: What is Local SEO?”

Want More?

If you haven’t guessed from the title of the post as well as the hints to following articles in this series, then know that this is just the first step to learning what SEO is all about.

The primary purpose of this series is to provide 100% transparency between us at Evergrow Marketing and our current and future partners, as well as to educate you on why SEO is so crucial for your business.

It’s too easy for marketing agencies and freelancers to tell you they are working on your SEO and take advantage of your lack of technical knowledge. We aim to clear up those blurred lines between what SEO is and what you’re really paying for.

If you have more questions, leave a comment below!
